We’ve walked into mature SOAR programs with hundreds of playbooks, and the results often disappoint. The playbooks multiplied, but the incident queue kept growing faster than the code meant to tame it.

That gap is a big reason agentic SOC has become a serious topic for security teams in 2026. But the term gets used loosely enough that it's worth being precise. What does an agentic SOC actually mean? How does it differ from what security teams already have? And where does the human analyst fit in a model that's increasingly running on AI?

What is an Agentic SOC?

An agentic SOC is a security operations center where AI agents handle investigation, triage, and response tasks through dynamic reasoning rather than static, pre-written rules. The word "agentic" comes from AI research, where it describes systems that can set goals, plan sequences of actions, and adapt their approach based on what they find.

In practical terms, the AI reads the alert, decides what to look up, and pulls relevant context from your EDR, SIEM, cloud resources, identities, code, threat intelligence feeds, and more. It then forms a hypothesis about what happened, presents its conclusions to a human with supporting evidence, and takes action either automatically or with a human in the loop.

How agency differs from simple automation

When a SOAR playbook fires, it follows a path defined by a human engineer at the time the playbook was written. The playbook simply checks whether condition A matches and, if it does, runs action B.

An AI agent does something structurally different. It receives a task, such as investigating a phishing alert, and then decides what steps to take. It might query Active Directory for the targeted user's role, check if the sending domain was registered recently, pull VirusTotal results for the attached file, and look for lateral movement from the same endpoint in the past 48 hours. If any of those lookups change the picture, the agent adjusts its approach.

This capacity for goal-directed, adaptive behavior is what separates AI security agents from the automation that came before them.
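The contrast can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the tool names, the 30-day domain-age threshold, and the `choose_next` function are all assumptions, and `choose_next` is a hard-coded stand-in for the model reasoning a real agent would perform.

```python
def run_playbook(alert):
    """Static playbook: if condition A matches, run action B. Nothing else."""
    if alert["type"] == "phishing":
        return ["quarantine_email"]
    return []  # no playbook was written for this alert type


def run_agent(alert, tools, choose_next):
    """Adaptive loop: each result can change which step comes next."""
    findings, trace = {}, []
    while (step := choose_next(alert, findings)) is not None:
        findings[step] = tools[step](alert)
        trace.append(step)
    return trace, findings


def choose_next(alert, findings):
    """Hypothetical stand-in for model reasoning (an assumption of this sketch)."""
    if "user_role" not in findings:
        return "user_role"
    if "domain_age_days" not in findings:
        return "domain_age_days"
    # A recently registered sending domain changes the picture: dig deeper.
    if findings["domain_age_days"] < 30:
        for extra in ("attachment_scan", "lateral_movement_48h"):
            if extra not in findings:
                return extra
    return None  # nothing left to check, stop the investigation
```

With a three-day-old sending domain, the agent loop adds the attachment scan and the 48-hour lateral movement search. With an old domain, it stops after the first two lookups. The playbook returns the same action either way.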

The 4 Core Traits: Autonomy, Planning, Reasoning, and Adaptability

Security platforms marketing agentic capabilities vary widely in what they actually deliver. The term is meaningful when it describes these four properties working together:

- Autonomy means the agent can complete multi-step tasks without a human approving each action. It decides which data sources to query, which tools to use, and in what order.

- Planning means the agent can decompose a complex goal into sub-tasks. Investigating an alert becomes a sequence of lookups, correlations, and checks that the agent manages independently.

- Reasoning means the agent weighs evidence. It retrieves data and interprets it. "This is a true positive. The IP address matches a known bad actor, the user has no history of downloading that type of file, and the user bulk-downloaded several of those files within 30 seconds" is a conclusion drawn from reasoning across multiple data points.

- Adaptability means the agent changes its plan based on the alert and environment. If the initial lookup reveals that the alert fired on an AWS event, the agent adjusts its data gathering and assessment accordingly rather than continuing down an irrelevant path.

Agentic SOC vs. legacy SOAR and why the playbook is dead

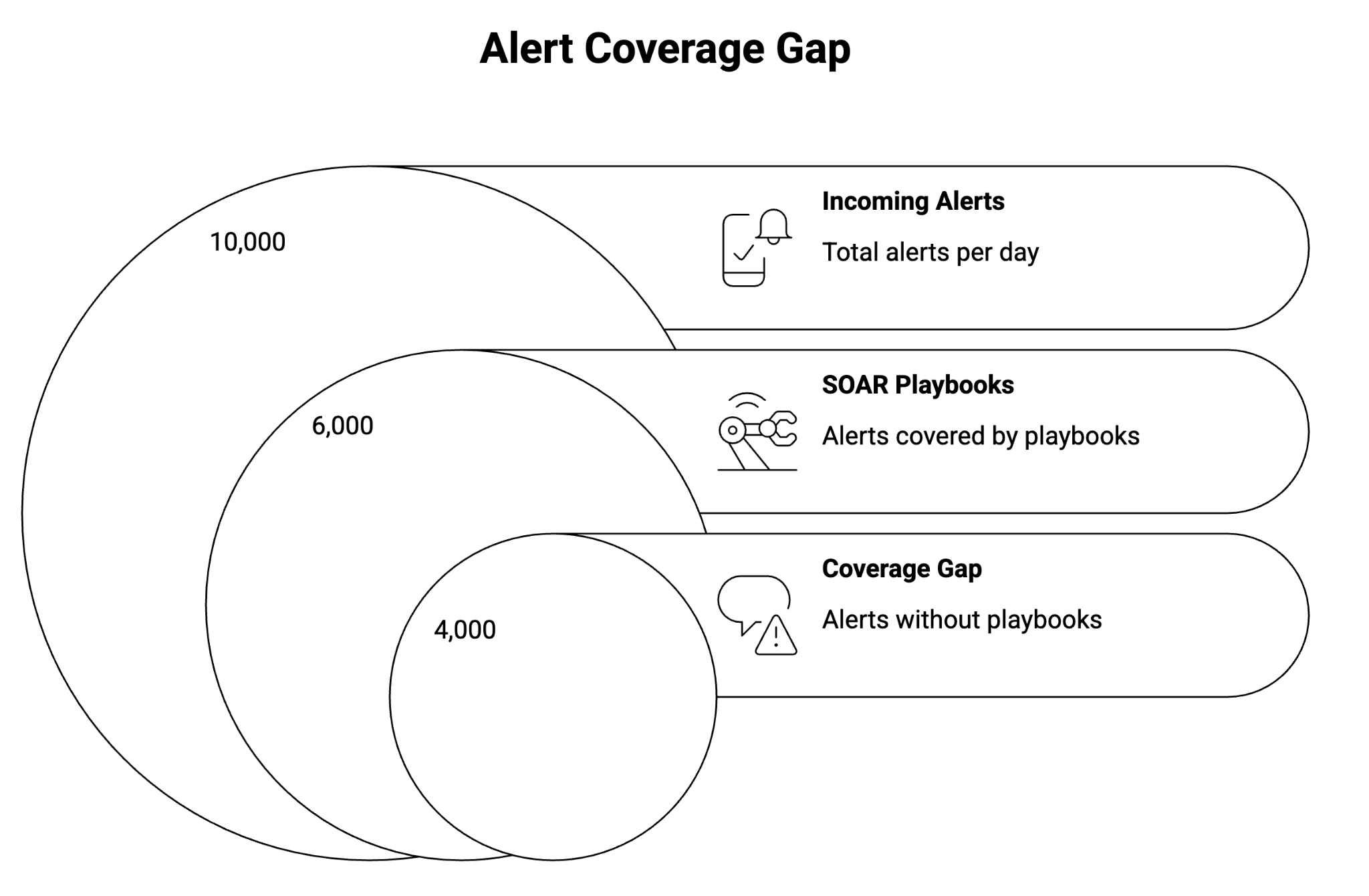

Teams have invested years building playbook libraries, and for well-defined, high-frequency tasks, deterministic automation still makes sense. But the coverage problem is real.

The core issue is that playbooks only work for alert types that are common enough and well-understood enough to have a playbook written for them. Everything else falls to human analysts, which means it often doesn't get investigated at all.

The proportion varies by organization, but the pattern is consistent. SOAR captures the frequent and the predictable. Agentic systems can handle a majority of alerts, including the long tail of rare and unique situations. An AI agent doesn't need a pre-written procedure for an alert combination it has never seen before. It can reason about unfamiliar situations the same way a good analyst would, by pulling relevant context and working through what it means.

Agentic SOC platforms report mean-time-to-triage dropping from hours to minutes for alert categories that previously had no automation coverage.

The other limitation of playbook-based SOAR is brittleness. When your environment changes (new SaaS tools, a cloud migration, an acquisition), playbooks break or stop being accurate. Maintaining them is a full-time job. AI agents are less sensitive to environmental drift because they're reasoning from current data rather than executing a procedure written against a prior state of your environment.

How an agentic SOC works

When an alert fires, it enters an orchestration layer that routes it to an appropriate AI agent. The agent reads the alert metadata and the associated raw log data, then begins executing an investigation plan.

The agent determines what information it needs based on the alert type and the data already visible. For a suspicious login alert, it might start with geolocation and device history. For a malware detection, it might start with the process tree and network connections made in the same window.

The agent uses a set of tools and data sources (identity provider, cloud data, resource configuration, threat intelligence feeds, ticketing system, etc.) to execute its investigation steps. Each tool call returns data that may inform the next step. When the agent reaches a conclusion, it backs that conclusion with the evidence it gathered. It then generates a human-readable summary and optionally takes a defined response action (containment, block, notification).
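The flow described above (pick first steps from the alert type, call tools, keep the evidence, produce a summary and an optional response) might look roughly like this. It is a sketch under stated assumptions: `FIRST_STEPS`, the tool names, the `suspicious` flag in tool results, and the verdict rule are all hypothetical placeholders, and a real agent would reason over the evidence rather than apply a one-line rule.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Evidence:
    tool: str
    result: dict


@dataclass
class Report:
    verdict: str
    evidence: list = field(default_factory=list)
    response: Optional[str] = None

    def summary(self) -> str:
        """Human-readable summary: verdict first, then the supporting evidence."""
        lines = [f"Verdict: {self.verdict}"]
        lines += [f"- {e.tool}: {e.result}" for e in self.evidence]
        if self.response:
            lines.append(f"Response: {self.response}")
        return "\n".join(lines)


# Hypothetical mapping from alert type to the first tools worth calling.
FIRST_STEPS = {
    "suspicious_login": ["geolocation", "device_history"],
    "malware_detection": ["process_tree", "network_connections"],
}


def investigate(alert: dict, tools: dict) -> Report:
    evidence = []
    for name in FIRST_STEPS.get(alert["type"], []):
        evidence.append(Evidence(name, tools[name](alert)))
    # Placeholder verdict rule (assumption): any suspicious result escalates.
    suspicious = any(e.result.get("suspicious") for e in evidence)
    verdict = "needs_review" if suspicious else "benign"
    return Report(verdict, evidence, response="notify" if suspicious else None)
```

Keeping every tool result attached to the report is the design choice that matters here: the summary is generated from the evidence, so the conclusion and its support cannot drift apart.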

The quality of this loop depends heavily on data access. Agentic systems need unified telemetry. Siloed data environments, where your endpoint data and network data can't be correlated against a shared timeline, create blind spots that limit what an agent can reason about. This is driving the adoption of unified data architectures as the infrastructure for agentic SOC deployments.
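To make "a shared timeline" concrete, here is a small sketch that merges per-source event lists and pulls everything near a pivot event. The event shape (a dict with a `ts` timestamp and a `source` label) and the five-minute window are assumptions for illustration. Each input list is assumed to be sorted by timestamp already, which `heapq.merge` requires.

```python
import heapq
from datetime import datetime, timedelta


def unified_timeline(*sources):
    """Merge per-source event lists (each sorted by 'ts') into one timeline."""
    return list(heapq.merge(*sources, key=lambda e: e["ts"]))


def events_near(timeline, pivot_ts, window=timedelta(minutes=5)):
    """Return events from any source within `window` of the pivot timestamp."""
    return [e for e in timeline if abs(e["ts"] - pivot_ts) <= window]


t0 = datetime(2026, 1, 1, 10, 0)
endpoint_events = [
    {"ts": t0, "source": "edr", "event": "process_start"},
    {"ts": t0 + timedelta(minutes=30), "source": "edr", "event": "file_write"},
]
network_events = [
    {"ts": t0 + timedelta(minutes=2), "source": "network", "event": "dns_query"},
    {"ts": t0 + timedelta(hours=1), "source": "network", "event": "tls_session"},
]

timeline = unified_timeline(endpoint_events, network_events)
related = events_near(timeline, t0)  # the process start plus the DNS query
```

In a siloed environment the second call is the one that fails: the agent can see the process start but has no way to ask what the network saw in the same window.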

The role of the human analyst in an agentic model

AI agents are not replacing SOC analysts, but the picture is more complicated than that. Agents take over most of what Tier 1-2 analysts do today, while making the remaining human work more demanding.

The volume of manual triage and investigation work drops substantially in agentic deployments. That's the point. Agents handle the investigation steps that currently consume most of an analyst's day. What remains for humans is the judgment that agents aren't equipped to make and the creative tasks like threat hunting and detection engineering.

From operator to orchestrator

The analyst's role shifts from executing investigations to supervising agents, handling escalations, and running ad-hoc investigations. This requires different skills than traditional SOC work. Analysts need to understand how to interpret agent outputs, when to trust a conclusion and when to dig deeper, how to write business context for agents, and how to think like a bad actor to find novel attacks.

Some security teams are finding that the agentic model exposes skills gaps. Analysts who were productive at high-volume triage aren't necessarily good at the investigation and judgment work that agentic systems escalate to them. This is a people and training problem as much as a technology one.

The concept of human-in-the-loop (HITL) in agentic SOC refers to where humans participate in the automated workflow. For high-confidence, low-risk actions (blocking a known-malicious IP, isolating a confirmed-infected endpoint), most organizations are moving toward a fully autonomous response. For higher-risk actions (disabling a user account, quarantining a server), human approval stays in the loop. The boundary is a policy question that each organization sets based on its environment and risk tolerance.
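A policy boundary like this can be written down as data plus one decision function. The sketch below is illustrative only: the action names, the two risk tiers, and the 0.9 confidence threshold are assumptions, and each organization would set its own values.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical policy table; the split mirrors the examples in the text.
ACTION_RISK = {
    "block_ip": Risk.LOW,
    "isolate_endpoint": Risk.LOW,
    "disable_user_account": Risk.HIGH,
    "quarantine_server": Risk.HIGH,
}


def requires_approval(action: str, confidence: float, min_confidence: float = 0.9) -> bool:
    """Return True when a human must approve before the agent acts."""
    # Unknown actions default to high risk, so new capabilities fail closed.
    risk = ACTION_RISK.get(action, Risk.HIGH)
    return risk is Risk.HIGH or confidence < min_confidence
```

Under these assumed values, `requires_approval("block_ip", 0.95)` is `False` and the agent proceeds on its own, while `requires_approval("disable_user_account", 0.99)` is `True` no matter how confident the agent is.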

Transparency, governance, and the AI trust problem

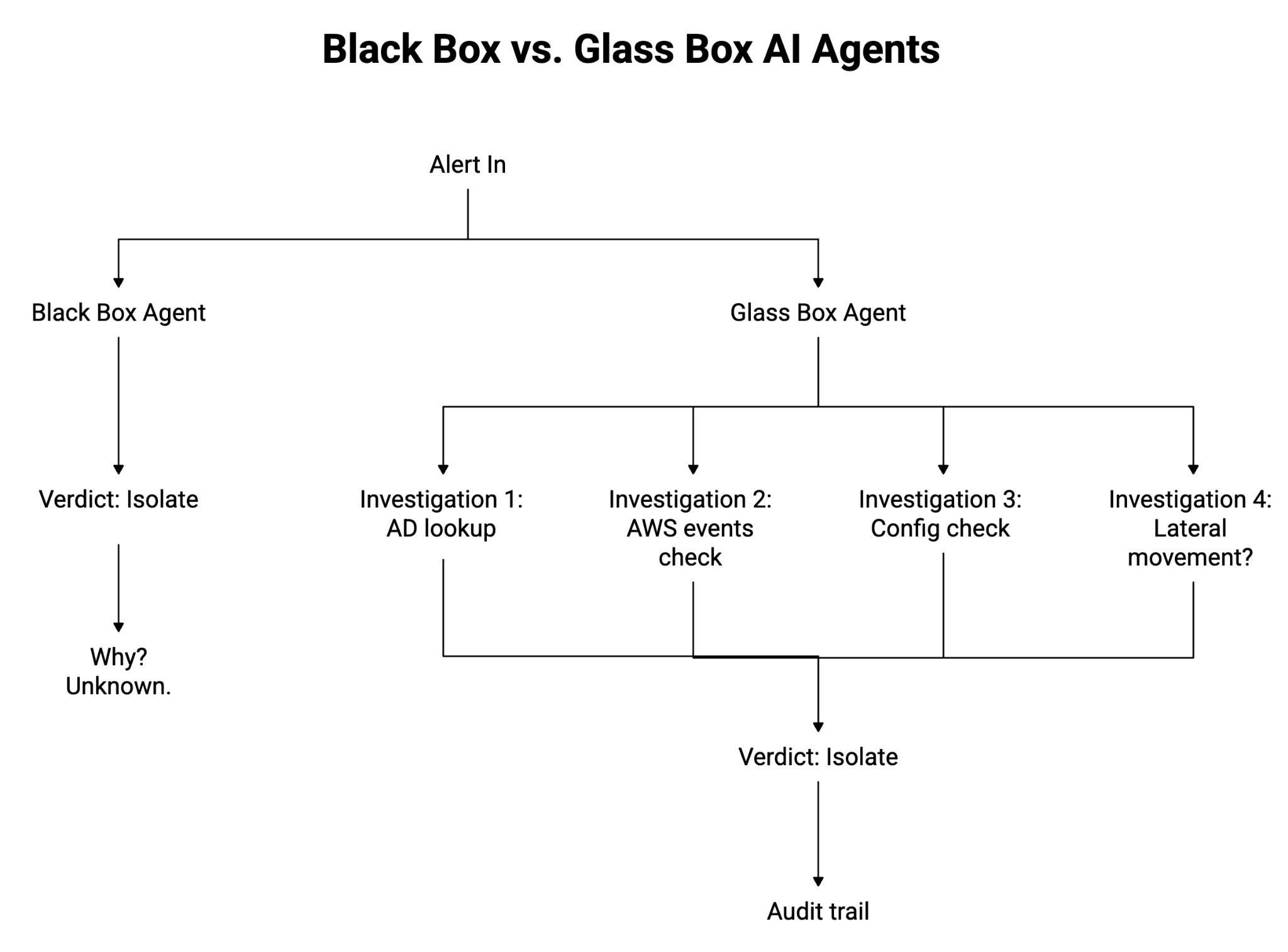

The biggest barrier to agentic SOC adoption in 2026 is the question of whether security teams can trust what agents decide, and whether they can explain those decisions when something goes wrong.

A SOC that can't audit its agents has a different kind of problem than a SOC without automation. If an agent incorrectly isolates a production server and you can't reconstruct why the agent made that call, you've lost the ability to prevent the same error in the future.

The questions to ask before adopting an agentic platform: Can you view a step-by-step record of every action an agent took during an investigation? Can you see which questions it asked and the resulting answers, and what it concluded from each? Can you define guardrails that prevent agents from taking specific categories of action without human approval? And when an agent makes an error, can you trace the failure point in its reasoning?
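As a rough picture of what a reviewable step-by-step record could contain, consider the sketch below. The field names and the `AuditTrail` class are hypothetical and do not describe any specific platform's log format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Step:
    index: int
    question: str    # what the agent wanted to know
    tool: str        # where it looked
    answer: str      # what came back
    conclusion: str  # what the agent inferred from the answer


class AuditTrail:
    """Append-only record of one investigation (hypothetical structure)."""

    def __init__(self, investigation_id: str):
        self.investigation_id = investigation_id
        self._steps = []

    def record(self, question, tool, answer, conclusion) -> Step:
        step = Step(len(self._steps) + 1, question, tool, answer, conclusion)
        self._steps.append(step)
        return step

    def first_step_where(self, predicate):
        """Locate the earliest step matching a reviewer's predicate, or None."""
        return next((s for s in self._steps if predicate(s)), None)

    def to_json(self) -> str:
        return json.dumps(
            {"investigation_id": self.investigation_id,
             "steps": [asdict(s) for s in self._steps]},
            indent=2,
        )
```

When an agent gets something wrong, a reviewer can walk this record to find the first step whose conclusion does not follow from its answer, which is the failure point the last question asks about.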

Glass box versus black box AI is a useful shorthand for evaluating these capabilities. A black-box agent gives you a conclusion but not the reasoning. A glass-box agent exposes its decision chain in a format that a human analyst can review and audit. For regulated industries where investigation procedures need to be defensible, glass-box is a requirement.

The governance infrastructure around AI agents is immature relative to the agent capabilities themselves. Organizations moving quickly toward autonomous response are often ahead of their ability to audit what their agents are doing. That gap is worth closing before incidents reveal it. The NIST AI Risk Management Framework offers a starting point for teams building governance policies around agentic systems.

The architectural shift happening in SOCs

Agentic SOCs are a new architectural center of gravity. Instead of treating alerts as the primary unit of work, an agentic SOC treats the investigation as the unit of work, where agents pull context across identity, endpoint, cloud, network, and SaaS, form hypotheses, test them, and produce an evidentiary narrative that a human can review. That shift only works when the environment supports reasoning from unified, queryable telemetry and an orchestration layer that decides which tasks can run autonomously versus which require human approval.

Good agentic SOC platforms are built for governed autonomy. If you can’t reconstruct why an agent escalated, contained, or closed an incident, you can’t trust it, tune it, or defend it. If you can, cycle times drop and analyst load lightens as a natural consequence; if you can’t, you’ll mostly end up with a smarter interface on top of the same backlog.

Frequently Asked Questions

What is an agentic SOC?

An agentic SOC is a security operations center where autonomous AI agents handle detection, triage, investigation, and response without waiting for manual instructions at each step. Unlike rule-based automation, agents reason about context, infer intent, and act dynamically across cloud, SaaS, identity, and endpoint environments. The result is a SOC that operates continuously at machine speed while keeping human analysts in control of high-priority decisions.

What is the difference between an agentic SOC and SOAR?

SOAR executes predefined playbooks when specific conditions are met. It automates tasks, but only within rules someone wrote in advance. An agentic SOC goes further: AI agents reason about novel situations, weigh competing signals, and adapt their approach without requiring a pre-authored playbook for every scenario. Where SOAR is rule-driven, an agentic SOC is goal-driven.

What is the difference between an AI SOC and an agentic SOC?

An AI SOC is a broad term for any SOC that uses machine learning or AI-assisted tooling, including SIEM anomaly detection or AI-assisted alert scoring. An agentic SOC specifically describes a SOC where AI agents take autonomous, multi-step action across the full detection and response lifecycle, not just surfacing signals for human review. All agentic SOCs are AI SOCs, but not all AI SOCs are agentic.

Can an agentic SOC replace human analysts?

No. Agentic SOCs are designed to eliminate Tier 1 and Tier 2 busywork so analysts can focus on strategic threats, architecture decisions, and high-judgment calls that require human context. AI agents handle the repetitive, time-sensitive work: grouping alerts, running investigations, confirming users, and closing low-risk findings. Human analysts remain the decision-makers for escalations, novel attack patterns, and organizational risk assessments.

How does an agentic SOC reduce alert fatigue?

Agentic SOCs reduce alert fatigue by replacing alert-centric workflows with context-centric findings. Instead of surfacing hundreds of individual alerts, AI agents correlate related events, suppress known-benign activity through behavioral baselining, and deliver consolidated findings that include root cause, affected assets, and recommended response steps. Analysts see fewer, richer findings rather than a queue that grows faster than they can clear it.
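The consolidation step can be sketched as grouping alerts by entity and time proximity after dropping known-benign activity. Everything specific here is an assumption: the alert fields (`entity`, `rule`, `ts`), the 30-minute window, and the static `benign_rules` set, which stands in for the behavioral baselining a real system would use.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def consolidate(alerts, benign_rules=frozenset(), window=timedelta(minutes=30)):
    """Group alerts into findings: same entity, gaps no longer than `window`."""
    by_entity = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        if alert["rule"] in benign_rules:
            continue  # suppressed: stand-in for behavioral baselining
        by_entity[alert["entity"]].append(alert)

    findings = []
    for entity, items in by_entity.items():
        group = [items[0]]
        for alert in items[1:]:
            if alert["ts"] - group[-1]["ts"] <= window:
                group.append(alert)
            else:
                findings.append({"entity": entity, "alerts": group})
                group = [alert]
        findings.append({"entity": entity, "alerts": group})
    return findings


t0 = datetime(2026, 1, 1, 10, 0)
alerts = [
    {"entity": "alice", "rule": "impossible_travel", "ts": t0},
    {"entity": "alice", "rule": "backup_job", "ts": t0 + timedelta(minutes=5)},
    {"entity": "alice", "rule": "mfa_fatigue", "ts": t0 + timedelta(minutes=10)},
    {"entity": "bob", "rule": "malware", "ts": t0 + timedelta(hours=2)},
    {"entity": "alice", "rule": "new_device", "ts": t0 + timedelta(hours=3)},
]
findings = consolidate(alerts, benign_rules={"backup_job"})
```

Here five alerts collapse into three findings: two related alerts for one user, a later isolated alert for the same user, and one alert for a second user. The root cause and recommended response that a full finding would carry are outside the scope of this sketch.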