Most breaches are not discovered by a single, perfectly labeled alert. They are often uncovered when someone connects weak signals, asks a sharper question, and follows the evidence. That is the work of threat hunting: proactively identifying what detections miss, before attackers complete their objectives.

Threat hunting can feel like an art, but the best teams run it like an engineering discipline. A strong threat hunt program has clear hypotheses, defensible scoping, fast ways to investigate, and outcomes that feed detections, response, and resilience.

What threat hunting is, and what it is not

Threat hunting is the proactive process of searching for adversary activity that has evaded preventive controls and routine detection logic. Rather than waiting for a high-confidence alert, hunters deliberately investigate behaviors, relationships, and anomalies that may indicate compromise, abuse, or exposure.

It is distinct from alert triage, which focuses on the reactive handling of queued detections; from compliance reporting, which centers on producing periodic evidence of control effectiveness; and from vulnerability management, which prioritizes and remediates known weaknesses, even though vulnerability data can inform hunt hypotheses.

A threat hunt leverages intelligence, telemetry, and operational context to determine whether an attacker is already present in the environment or positioning themselves to gain a foothold.

To anchor a program in a widely used framework, many teams map hunts to MITRE ATT&CK tactics and techniques, which helps define scope and expected evidence patterns.

Why threat hunting matters now

Three structural pressures have elevated threat hunting from an advanced practice to a baseline capability for mature security programs. First, modern attack paths are inherently cross-domain. Identity systems, SaaS applications, cloud control planes, endpoints, and code repositories now converge within a single kill chain. When telemetry and operational context remain siloed, it becomes materially difficult to investigate lateral movement or privilege escalation that traverses those boundaries.

Second, exploit velocity continues to compress. Publicly disclosed vulnerabilities and misconfigurations are rapidly weaponized, leaving defenders with a narrow window to assess exposure and identify suspicious follow-on activity. The Cybersecurity and Infrastructure Security Agency (CISA) Known Exploited Vulnerabilities (KEV) catalog is a practical input to hunt planning because it tracks vulnerabilities with evidence of active exploitation in the wild, allowing teams to prioritize investigation based on observed adversary behavior rather than theoretical risk.
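As a rough illustration of using KEV as a hunt-planning input, the sketch below intersects a set of KEV-listed CVE IDs with a scanner-style asset inventory to produce a shortlist of assets worth hunting on first. The `Asset` shape, the function name, and the placeholder CVE IDs are assumptions made for this example; in practice the CVE IDs would come from the published KEV catalog and the inventory from your own vulnerability data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """Hypothetical inventory record: an asset and the CVE IDs reported on it."""
    name: str
    cves: frozenset


def kev_hunt_candidates(kev_cve_ids, assets):
    """Return {asset name: sorted KEV-listed CVE IDs found on that asset}.

    Assets with no KEV-listed CVEs are omitted, so the result is a
    shortlist for follow-on hunting rather than a full exposure report.
    """
    kev = set(kev_cve_ids)
    candidates = {}
    for asset in assets:
        hits = sorted(asset.cves & kev)
        if hits:
            candidates[asset.name] = hits
    return candidates


# Placeholder CVE IDs for illustration only, not real catalog entries.
kev_ids = {"CVE-0000-0001", "CVE-0000-0002"}
inventory = [
    Asset("web-01", frozenset({"CVE-0000-0001", "CVE-0000-0009"})),
    Asset("db-01", frozenset({"CVE-0000-0005"})),
]
shortlist = kev_hunt_candidates(kev_ids, inventory)
```

Each shortlisted asset then becomes a candidate scope for a hypothesis such as "this host was exploited before it was patched", which is checked against follow-on activity rather than the vulnerability record itself.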

Third, dwell time has not disappeared. Even as median detection metrics improve, attackers continue to capitalize on gaps in visibility, fragmented processes, and implicit trust relationships. Industry reporting consistently shows that real-world incidents remain numerous and operationally diverse. These conditions are precisely where proactive, hypothesis-driven investigation produces meaningful returns.

Threat hunting is the discipline that surfaces what automated controls miss, including overlooked persistence mechanisms, normal-looking administrative actions, suspicious OAuth consent grants, or token reuse patterns that never crossed a predefined detection threshold.

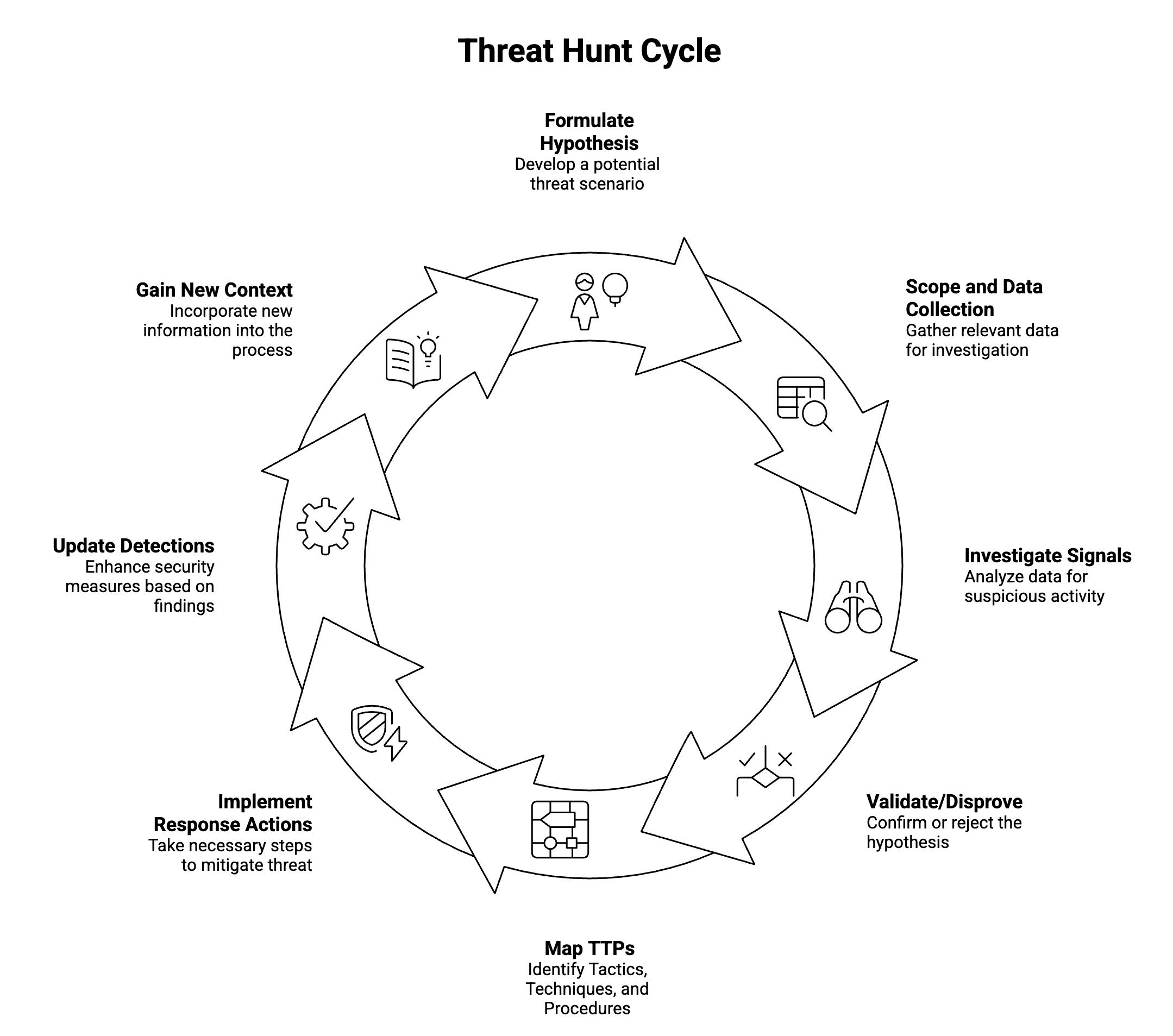

The core workflow of a threat hunt

A repeatable threat hunt workflow is a loop with five stages: hypothesize, collect, investigate, decide, and improve.

The key is speed-to-clarity. If it takes days to investigate basic questions, hunts die on the vine, and teams revert to reacting to alerts.

How to run a threat hunt step by step

Use a single, consistent playbook so hunts are comparable and improvable over time.

- Start with a falsifiable hypothesis. Example: “A service principal is being used from unusual geographies to access sensitive storage.”

- Define scope and time window. Pick a bounded set of identities, applications, repositories, or cloud projects. Decide what “normal” looks like for that population.

- Collect the minimum evidence needed. Pull identity logs, API activity, endpoint signals, and relevant configuration state. If you cannot access the context quickly, you will struggle to investigate confidently.

- Investigate for behaviors and events. Look for sequences, such as new credential creation followed by privileged access, or repo token creation followed by CI pipeline modification.

- Decide and document. Confirmed compromise, benign-but-risky behavior, or inconclusive. In each case, record what evidence would have made the investigation faster.

- Turn outcomes into improvements. Create or tune detections, update response playbooks, and refine access controls.

This is intentionally simple. The sophistication comes from your hypotheses and your ability to investigate efficiently across domains.
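The "look for sequences" step can be sketched in a few lines. The example below is a minimal illustration, not a production detector: it assumes events have already been normalized into a hypothetical `Event` shape with a timestamp, an identity, and an action name, and the action strings used here (`credential.create`, `privileged.access`) are invented for the example.

```python
from datetime import datetime, timedelta
from typing import NamedTuple


class Event(NamedTuple):
    """Hypothetical normalized log event."""
    time: datetime
    identity: str
    action: str


def find_sequences(events, first, then, window):
    """Return (first_event, then_event) pairs for the same identity.

    A pair is reported when an identity performs `then` no later than
    `window` after its most recent `first` action.
    """
    last_first = {}  # identity -> most recent `first` event
    pairs = []
    for ev in sorted(events, key=lambda e: e.time):
        if ev.action == first:
            last_first[ev.identity] = ev
        elif ev.action == then:
            start = last_first.get(ev.identity)
            if start is not None and ev.time - start.time <= window:
                pairs.append((start, ev))
    return pairs


t0 = datetime(2024, 1, 1, 12, 0)
sample = [
    Event(t0, "svc-a", "credential.create"),
    Event(t0 + timedelta(minutes=10), "svc-a", "privileged.access"),
    Event(t0 + timedelta(minutes=5), "svc-b", "privileged.access"),
]
suspicious = find_sequences(
    sample, "credential.create", "privileged.access", timedelta(hours=1)
)
```

Here only `svc-a` is returned, because `svc-b` used privileged access without a preceding credential creation. The window length is a scoping decision that belongs in the hypothesis, not a universal constant.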

What to investigate during hunts

A practical threat hunt program focuses on categories that repeatedly produce findings and harden your posture, even when you disprove the hypothesis.

Identity and access abuse

Identity frequently functions as the control plane for cloud and SaaS environments, which makes it a high-value focal point for threat hunting. Hunts in this domain commonly examine suspicious OAuth application grants and anomalous consent patterns, as well as unusual token usage such as abnormal refresh behavior or impossible travel scenarios. They also assess privilege changes, newly assigned administrative roles, and role chaining that could indicate escalation or abuse of delegated access.
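An impossible travel check is one of the simpler identity hunts to express in code. The sketch below assumes each sign-in has already been reduced to a `(timestamp, latitude, longitude)` tuple; the function names and the `max_kmh` and `min_km` thresholds are illustrative assumptions, and real data needs tuning for VPN egress points and imprecise IP geolocation.

```python
import math
from datetime import datetime, timedelta

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(first, second, max_kmh=900.0, min_km=100.0):
    """Flag two sign-ins whose implied travel speed exceeds `max_kmh`.

    Each sign-in is a (datetime, latitude, longitude) tuple. Hops shorter
    than `min_km` are ignored to absorb geolocation noise.
    """
    (t1, lat1, lon1), (t2, lat2, lon2) = sorted((first, second), key=lambda s: s[0])
    distance = haversine_km(lat1, lon1, lat2, lon2)
    if distance < min_km:
        return False
    hours = (t2 - t1).total_seconds() / 3600.0
    if hours == 0:
        return True  # simultaneous sign-ins from distant locations
    return distance / hours > max_kmh


t0 = datetime(2024, 1, 1, 9, 0)
london = (t0, 51.5074, -0.1278)
new_york_one_hour_later = (t0 + timedelta(hours=1), 40.7128, -74.0060)
flagged = is_impossible_travel(london, new_york_one_hour_later)
```

A hit is a lead rather than a verdict: the follow-up question is whether the second sign-in used a token, device, or application the identity has used before.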

For a contemporary framework that aligns incident handling with structured analysis, NIST provides guidance on analyzing incident-related data and determining appropriate response actions. That guidance maps effectively to hunting workflows, particularly when translating investigative findings into containment, remediation, and long-term control improvements.

Cloud control plane activity

Cloud attacks frequently blend into activity that appears to be legitimate administrative behavior, which makes careful contextual analysis essential. Investigations should examine unusual IAM policy modifications, especially those that expand permissions or alter trust boundaries. They should also review the creation of new access keys, service accounts, or trust relationships that could enable persistence or cross-account access. In addition, analysts need to scrutinize data store access patterns that are anomalous relative to the identity’s historical behavior and the expected workload profile, since subtle deviations in access scope or timing often indicate credential misuse or privilege escalation.
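One simple way to compare data store access against an identity's history is a first-seen check: flag resources an identity touched in the hunt window but never during the baseline period. The sketch below assumes access logs have been reduced to `(identity, resource)` pairs; the function name and sample values are hypothetical, and this is only one of several reasonable baselining approaches.

```python
from collections import defaultdict


def new_resource_access(baseline_events, recent_events):
    """Return {identity: sorted resources accessed recently but not in baseline}.

    Both inputs are iterables of (identity, resource) pairs. Identities
    with no baseline history have all of their recent resources reported.
    """
    seen = defaultdict(set)
    for identity, resource in baseline_events:
        seen[identity].add(resource)

    novel = defaultdict(set)
    for identity, resource in recent_events:
        if resource not in seen[identity]:
            novel[identity].add(resource)
    return {identity: sorted(resources) for identity, resources in novel.items()}


baseline = [("svc-a", "bucket-1"), ("svc-a", "bucket-2"), ("svc-b", "bucket-1")]
recent = [("svc-a", "bucket-1"), ("svc-a", "bucket-9"), ("svc-c", "bucket-1")]
first_seen = new_resource_access(baseline, recent)
```

In this sample, `svc-a` reaching `bucket-9` and the previously unseen `svc-c` are both surfaced. Whether either matters depends on the expected workload profile, which is why the scope step asks you to define "normal" up front.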

SaaS and collaboration misuse

SaaS platforms are where critical business data resides and where attackers can most easily blend into normal user behavior. Effective hunts in these environments examine sharing permission changes, suspicious mailbox rule creation, unusual file download patterns, and access originating from unmanaged or previously unseen devices.

When evaluating platforms that claim to accelerate investigation and threat hunting, focus on whether they meaningfully reduce the time required to build context. The most capable approaches pre-link identity, cloud, and SaaS relationships so investigators can move from signal to impact assessment without manually stitching together logs from multiple systems. This concept of “fast context” is often the differentiator between a tool that stores data and one that materially improves investigative velocity.

Endpoint, lateral movement, and persistence

Classic adversary behaviors still matter, particularly when they are combined with cloud-based pivots that extend an intrusion across environments. Remote service creation remains a common mechanism for lateral movement, while precursors to credential dumping often signal an attempt to escalate privileges or expand access. Persistence mechanisms such as scheduled tasks, launch agents, or autorun entries continue to provide attackers with durable footholds that survive reboots and routine operational changes.

Mapping investigative findings to ATT&CK technique identifiers strengthens internal communication and operational alignment. By expressing results in terms of standardized technique IDs, security teams can more clearly articulate risk, identify detection coverage gaps, and prioritize engineering work to address the most meaningful weaknesses.
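Once findings carry technique IDs, identifying coverage gaps becomes a set difference. The sketch below is a minimal illustration under assumed data shapes: findings are `(hunt_id, technique_id)` pairs and detections map a rule name to the technique IDs it covers. The hunt IDs and rule names are hypothetical, and matching is by exact ID string, so a rule tagged with a parent technique does not count as covering its sub-techniques.

```python
def coverage_gaps(findings, detections):
    """Return sorted technique IDs seen in hunt findings with no detection.

    findings:   iterable of (hunt_id, technique_id) pairs
    detections: dict mapping rule name -> set of technique IDs it covers
    """
    observed = {technique for _hunt_id, technique in findings}
    covered = set().union(*detections.values())
    return sorted(observed - covered)


findings = [
    ("hunt-014", "T1053.005"),  # Scheduled Task
    ("hunt-014", "T1003"),      # OS Credential Dumping
    ("hunt-017", "T1543.003"),  # Windows Service
]
detections = {
    "rule-scheduled-task-created": {"T1053.005"},
    "rule-lsass-access": {"T1003"},
}
gaps = coverage_gaps(findings, detections)
```

The resulting list (here, `T1543.003`) is a reasonable starting backlog for detection engineering, ordered afterwards by how often each technique recurs across hunts.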

The difference between hunting and searching is data and context

Many teams are capable of querying logs, but far fewer can conduct high-confidence investigations that traverse systems and domains. Threat hunting begins to fail when identity representations are inconsistent across tools, when asset ownership and data sensitivity classifications are ambiguous, and when actions cannot be reliably correlated across SaaS platforms, cloud infrastructure, endpoints, and code repositories. It also degrades when retention policies and data schemas vary in ways that require analysts to manually normalize and stitch together records before meaningful analysis can begin.

For this reason, modern security programs prioritize curated and contextualized data foundations rather than raw log aggregation. Mature SOCs consistently learn that investigative effectiveness depends on the quality, normalization, and relational integrity of their data pipelines. Without the ability to connect identities, assets, actions, and timelines across systems, even experienced analysts cannot produce defensible conclusions.
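To make the identity-consistency problem concrete, the sketch below normalizes two common account formats into one canonical key so records from different tools can be joined. It is an illustration under stated assumptions: the function name is hypothetical, and the mapping from a down-level domain name (such as `CORP`) to a mail-style domain must be supplied by you, since it cannot be derived from the logs.

```python
def canonical_identity(raw, upn_domain_by_netbios):
    """Normalize an account string to a lowercase user@domain key.

    Handles 'DOMAIN\\user' and 'user@domain' forms. If the down-level
    domain is not in the supplied mapping, the lowercased input is
    returned unchanged so the gap stays visible instead of being guessed.
    """
    value = raw.strip().lower()
    if "\\" in value:
        domain, user = value.split("\\", 1)
        upn_domain = upn_domain_by_netbios.get(domain)
        return f"{user}@{upn_domain}" if upn_domain else value
    return value


domains = {"corp": "example.com"}  # hypothetical mapping
endpoint_account = canonical_identity("CORP\\Alice", domains)
saas_account = canonical_identity(" Alice@Example.com ", domains)
same_person = endpoint_account == saas_account
```

With both records keyed as `alice@example.com`, endpoint and SaaS events can be placed on a single timeline. Unmapped domains pass through untouched, which makes them easy to report as a data gap.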

How to structure a threat hunting program

You do not need a large team to begin threat hunting. Threat hunting done well requires operational discipline and the ability to investigate in a consistent, repeatable manner, no matter the team size.

A lightweight operating model is sufficient for many SOCs. A practical cadence has traditionally been one to two structured hunts per week per hunter, supplemented by ad hoc hunts triggered by new intelligence or active incidents. With AI-assisted SOC tooling that shortens investigation time, that cadence can plausibly double to two to four hunts per week per hunter.

Establish a clear quality threshold in which every hunt produces a durable outcome, even if the initial hypothesis does not validate. Outcomes typically fall into four categories: a validated security finding, an addition to or improvement in detection logic, a documented data gap that becomes a tracked engineering task, or a formally closed hypothesis with documented negative results and captured analytical notes.

That final category is important. A dead end is still valuable when the investigative path, queries, assumptions, and conclusions are recorded. Over time, this institutionalizes analytical reasoning, prevents redundant effort, and sharpens future hypotheses by clarifying what normal truly looks like in your environment.
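The four outcome categories and the documentation requirement for negative results can be enforced in the hunt record itself. The sketch below is one possible shape, with hypothetical class and field names: it refuses to record a closed-negative hunt unless both notes and the queries used are captured.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    VALIDATED_FINDING = "validated_finding"
    DETECTION_IMPROVEMENT = "detection_improvement"
    DATA_GAP = "data_gap"
    CLOSED_NEGATIVE = "closed_negative"


@dataclass
class HuntRecord:
    """Hypothetical record of a completed hunt."""
    hypothesis: str
    scope: str
    outcome: Outcome
    notes: str = ""
    queries: list = field(default_factory=list)

    def __post_init__(self):
        # A dead end only counts as a durable outcome when it is documented.
        if self.outcome is Outcome.CLOSED_NEGATIVE:
            if not self.notes.strip() or not self.queries:
                raise ValueError(
                    "closed-negative hunts must record notes and the queries used"
                )


record = HuntRecord(
    hypothesis="A service principal accesses sensitive storage from unusual geographies",
    scope="storage service principals, last 30 days",
    outcome=Outcome.CLOSED_NEGATIVE,
    notes="All access originated from known CI runner regions.",
    queries=["sign-ins by service principal grouped by country"],
)
```

Putting the rule in the constructor means an undocumented dead end fails at write time, rather than being discovered during a quarterly review.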

Reporting should include a quarterly review of time to investigate, recurrence of similar findings, and measurable detection lift so leadership can see operational impact rather than anecdotal activity.
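A quarterly view of time to investigate can be computed from the hunt log with very little code. The sketch below assumes each completed hunt is recorded as a `(quarter, hunt_type, hours)` tuple with quarter labels that sort chronologically as strings (for example `2024-Q1`); the function names and sample values are illustrative.

```python
from collections import defaultdict
from statistics import median


def median_hours_by_type(hunts):
    """Return {hunt_type: [(quarter, median_hours), ...]} ordered by quarter."""
    grouped = defaultdict(lambda: defaultdict(list))
    for quarter, hunt_type, hours in hunts:
        grouped[hunt_type][quarter].append(hours)
    return {
        hunt_type: [(q, median(values)) for q, values in sorted(by_quarter.items())]
        for hunt_type, by_quarter in grouped.items()
    }


def is_trending_down(series):
    """True when every quarter's median is lower than the previous quarter's."""
    values = [value for _quarter, value in series]
    return len(values) >= 2 and all(b < a for a, b in zip(values, values[1:]))


hunt_log = [
    ("2024-Q1", "oauth-consent", 10),
    ("2024-Q1", "oauth-consent", 6),
    ("2024-Q1", "oauth-consent", 8),
    ("2024-Q2", "oauth-consent", 4),
    ("2024-Q2", "oauth-consent", 6),
]
report = median_hours_by_type(hunt_log)
improving = is_trending_down(report["oauth-consent"])
```

The median is used instead of the mean so that a single unusually long investigation does not hide steady improvement in the typical case.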

Tooling decisions directly influence whether this model succeeds. If analysts spend most of their time normalizing inconsistent schemas and writing brittle, one-off queries, investigative throughput declines and organizational learning slows. When evaluating AI-driven SOC approaches, look for explanations that clarify how automation and agentic workflows accelerate correlation, enrichment, and hypothesis testing while preserving human analytical judgment at decision points.

Common pitfalls that derail threat hunt efforts

Most failed hunting programs fail for predictable reasons:

- No hypotheses, only wandering: “Let’s look around” is not a hunt.

- Unbounded scope: If everything is in scope, nothing is investigable.

- No closure loop: If outcomes do not feed detections and response, hunts become a side quest.

- Context debt: If basic questions take too long to investigate, momentum collapses.

For response readiness and coordination practices, the UK National Cyber Security Centre’s incident management guidance is a solid, non-vendor reference that complements hunt-to-response workflows.

What good looks like: a practical maturity checklist

A functional threat hunting program has the following characteristics:

- It is hypothesis-driven and explicitly mapped to ATT&CK techniques, ensuring that investigative work aligns to known adversary behaviors rather than ad hoc curiosity.

- The team can investigate identity, cloud, SaaS, and endpoint activity within a single coherent workflow so that cross-domain attack paths can be analyzed without fragmentation.

- Findings consistently translate into tangible detection engineering improvements, reinforcing a feedback loop between investigation and prevention.

- Over time, the average time to investigate repeat hunt types trends downward, demonstrating operational learning and improved data fluency.

If a program can reliably execute on these principles, its value compounds through faster investigations, stronger detections, and increasing organizational clarity about real risk.

Compounding security advantage through hunting

Threat hunting is the discipline of proactively investigating the gaps between what you log, what you alert on, and what attackers actually do. The strongest programs treat every hunt as a feedback loop: investigate quickly, decide confidently, and convert lessons into better detections and responses.

If you want to modernize how your team investigates and scales threat hunt coverage, consider running a structured evaluation of workflows and data foundations, then compare how quickly your SOC can investigate cross-domain hypotheses end to end. For teams exploring agentic approaches, an Exaforce demo or hands-on evaluation can be a low-friction way to benchmark investigation speed against your current process.