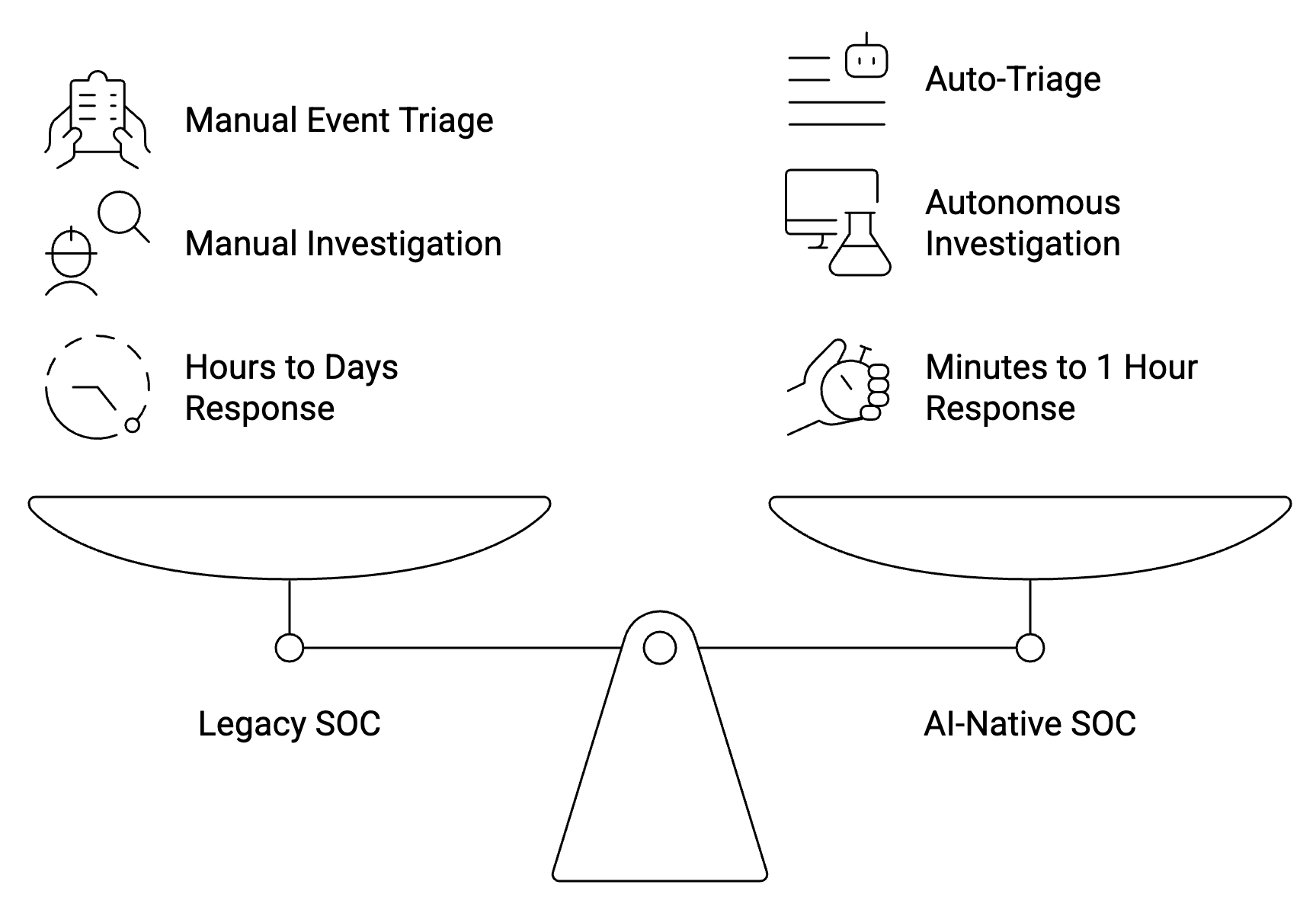

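With AI, attackers move in minutes. Most security teams still measure response in hours. That gap is an architectural problem: the security operations model most organizations still run was designed for a threat environment that no longer exists, and incremental automation will not close it.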

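The concept of an artificial intelligence (AI) native SOC addresses this directly. Rather than adding AI features to a legacy stack, it rebuilds security operations around what AI can actually do at machine speed: ingesting telemetry across every domain, correlating signals that human analysts could not connect in time, and responding before the attacker achieves their objective. For anyone making security investment decisions in 2026, understanding what that means in practice, and what separates a truly AI-native architecture from a rebrand, is increasingly important.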

What "AI-Native" Actually Means

The term is applied loosely. Vendors bolt a generative AI assistant onto a decade-old SIEM and call the result "AI-native." It is not.

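An AI-native SOC is one in which AI is the primary detection and response engine, not an add-on. The platform is built from the ground up to use machine learning models, large language models, and agentic workflows as its core operational layer, not as a feature layer sitting on top of rule-based detection.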

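The operational gap this architecture aims to close is significant. IBM's Cost of a Data Breach Report 2024 found that organizations using AI and automation in their security operations saved an average of $2.2 million per breach compared with those that did not. That figure reflects both response speed and the compounding effect of catching threats early in the kill chain, before lateral movement or data exfiltration begins.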

Four Capabilities That Define an AI-Native SOC

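When evaluating AI SOC capabilities, it helps to think in terms of four capability layers that a truly AI-native platform must cover. Together they are the minimum architecture required for machine-speed operations.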

Proprietary Detection Built on Behavioral Models

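Traditional SIEMs rely on correlation rules written by humans. These rules are deterministic: if event A and event B occur within a time window, fire an alert. The approach has two problems. A known threat pattern must exist before a rule can be written, and an attacker who understands rule-based detection can evade it by staying under thresholds or changing tactics.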
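To make the "event A then event B within a time window" pattern concrete, here is a minimal Python sketch of a deterministic correlation rule. The `Event` type, the event labels, and the `correlate` function are hypothetical names chosen for illustration and do not reflect any particular SIEM's rule language.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    host: str
    kind: str            # hypothetical label, e.g. "failed_login"
    timestamp: datetime


def correlate(events, first_kind, second_kind, window):
    """Return (first, second) pairs on the same host where the second
    event follows the first within `window`."""
    ordered = sorted(events, key=lambda e: e.timestamp)
    alerts = []
    for i, first in enumerate(ordered):
        if first.kind != first_kind:
            continue
        for second in ordered[i + 1:]:
            if second.timestamp - first.timestamp > window:
                break  # events are sorted, so nothing later can match
            if second.kind == second_kind and second.host == first.host:
                alerts.append((first, second))
    return alerts


t0 = datetime(2026, 1, 1, 12, 0)
window = timedelta(minutes=5)

# The second event lands inside the window, so the rule fires.
noisy = [Event("host-1", "failed_login", t0),
         Event("host-1", "privilege_change", t0 + timedelta(minutes=4))]
# The same activity spaced just past the window produces no alert.
patient = [Event("host-1", "failed_login", t0),
           Event("host-1", "privilege_change", t0 + timedelta(minutes=6))]

fired = correlate(noisy, "failed_login", "privilege_change", window)
missed = correlate(patient, "failed_login", "privilege_change", window)
```

The second case illustrates the evasion problem: the activity is identical, but waiting one extra minute keeps it outside the rule.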

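AI-native detection uses behavioral baselines and self-learning models instead. The system learns what normal looks like in a specific environment and surfaces deviations, including novel attack patterns that match no existing rule or signature. MITRE ATT&CK coverage gap analyses consistently show that the techniques used most often in real breaches are the ones signature-based tools cover least. Behavioral detection closes a substantial part of this gap because it looks for anomalous behavior rather than matches against a known-bad list.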
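As a rough illustration of baseline-driven detection, the sketch below learns a per-entity mean and standard deviation and scores new observations by how far they deviate. A z-score is a deliberately simple stand-in for the self-learning models described above, and the function names, the sample data, and the cutoff of 3 are assumptions made for the example.

```python
from statistics import mean, stdev


def build_baseline(history):
    """history maps an entity to past numeric observations
    (for example, daily outbound megabytes for a service account)."""
    return {entity: (mean(values), stdev(values))
            for entity, values in history.items()
            if len(values) >= 2}  # stdev needs at least two points


def anomaly_score(baseline, entity, value):
    """Return the absolute z-score, or None when no baseline exists."""
    if entity not in baseline:
        return None
    mu, sigma = baseline[entity]
    if sigma == 0:
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma


baseline = build_baseline({"svc-backup": [100, 110, 90, 105, 95]})

typical = anomaly_score(baseline, "svc-backup", 102)   # close to normal
unusual = anomaly_score(baseline, "svc-backup", 400)   # far outside normal
unknown = anomaly_score(baseline, "svc-new", 50)       # no history yet

ANOMALY_CUTOFF = 3.0  # assumed cutoff, tuned per environment in practice
flagged = unusual > ANOMALY_CUTOFF
```

No rule describes what the attack looks like here. The 400 MB observation is flagged only because it is unlike this account's own history.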

Automated Triage at Scale

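Alert volume is the practical barrier that keeps most SOC teams from operating effectively. A typical enterprise SOC receives tens of thousands of alerts per day. Tier 1 analysts spend most of their time on triage, deciding which alerts deserve investigation. Studies consistently show that 40 to 60% of these alerts are false positives.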

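AI-native triage changes this by scoring and contextualizing alerts automatically. Instead of presenting a flat queue, the system correlates raw events into incidents and enriches each incident with asset context, threat intelligence, and historical patterns, giving analysts a prioritized, contextualized view of what actually needs attention.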

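The triage layer also decides what gets escalated and what gets closed automatically. A mature AI SOC platform can close the bulk of low-confidence, low-risk alerts with no analyst involvement, so the team can focus on the incidents that require judgment.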
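The grouping, scoring, and auto-close logic described above can be sketched in a few lines of Python. The `Alert` fields, the priority formula (confidence multiplied by asset criticality), and both thresholds are assumptions chosen for illustration, not values taken from any product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    alert_id: str
    entity: str               # host, user, or other asset the alert concerns
    confidence: float         # 0..1, model confidence that this is malicious
    asset_criticality: float  # 0..1, business importance of the asset


def triage(alerts, low_confidence=0.3, low_risk=0.3):
    """Group alerts into per-entity incidents, auto-close the ones that are
    both low-confidence and low-risk, and rank the remainder."""
    grouped = defaultdict(list)
    for alert in alerts:
        grouped[alert.entity].append(alert)

    queue, auto_closed = [], []
    for entity, group in grouped.items():
        top_confidence = max(a.confidence for a in group)
        top_risk = max(a.asset_criticality for a in group)
        if top_confidence < low_confidence and top_risk < low_risk:
            auto_closed.append(entity)
            continue
        queue.append({
            "entity": entity,
            "priority": max(a.confidence * a.asset_criticality for a in group),
            "alert_ids": [a.alert_id for a in group],
        })
    queue.sort(key=lambda incident: incident["priority"], reverse=True)
    return queue, auto_closed


alerts = [
    Alert("A1", "db01", 0.9, 1.0),
    Alert("A2", "db01", 0.4, 1.0),
    Alert("A3", "kiosk7", 0.1, 0.1),
    Alert("A4", "ws12", 0.6, 0.5),
]
queue, auto_closed = triage(alerts)
```

In this example four raw alerts become two ranked incidents (`db01`, then `ws12`) and one automatic closure (`kiosk7`). An alert is closed only when both confidence and risk are low, so a low-confidence alert on a critical asset still reaches an analyst.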

Deep, Autonomous Investigation

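Triage surfaces the alert. Investigation tells you what actually happened. In a traditional SOC, investigation is manual: an analyst pulls logs, pivots across tools, builds a timeline, and writes up the findings. The process is slow, and it often takes longer than the attacker needs.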

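An AI-native investigation layer automates this. Once an alert crosses the triage threshold, the platform investigates autonomously: it queries across telemetry sources, traces lateral movement, maps activity to the MITRE ATT&CK framework, identifies affected assets, and generates a structured incident narrative. Analysts receive the full picture rather than a starting point for hours of manual pivoting.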

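This is where agentic AI in the SOC becomes meaningful. An agentic workflow means the AI system can carry out multi-step actions across multiple data sources and tools without waiting for human direction at each step. It can follow an investigation thread to its conclusion and then present the findings, with recommended response actions, to a human analyst for review.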
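A heavily simplified sketch of such a multi-step investigation follows. It treats telemetry as an in-memory dictionary and follows lateral movement breadth-first from the alerting host. The data shape, the `investigate` function, and the report fields are all hypothetical. A real platform would query live data sources and use models to decide each next step, which this sketch does not attempt.

```python
from collections import deque


def investigate(alert, telemetry, max_hosts=10):
    """Follow connections outward from the alerting host, collecting
    affected hosts, observed ATT&CK technique IDs, and a timeline."""
    pending = deque([alert["host"]])
    affected, techniques, timeline = [], set(), []

    while pending and len(affected) < max_hosts:
        host = pending.popleft()
        if host in affected:
            continue
        affected.append(host)
        for event in telemetry.get(host, []):
            timeline.append((event["time"], host, event["technique"]))
            techniques.add(event["technique"])
            if event.get("dest"):          # lateral movement to another host
                pending.append(event["dest"])

    return {
        "alert_id": alert["id"],
        "affected_hosts": affected,
        "techniques": sorted(techniques),
        "timeline": sorted(timeline),
        "recommended_actions": [f"isolate {host}" for host in affected],
        "status": "pending_analyst_review",  # a human reviews before action
    }


# Hypothetical telemetry keyed by host; technique IDs follow MITRE ATT&CK.
telemetry = {
    "ws-114": [
        {"time": 1, "technique": "T1078", "dest": None},      # Valid Accounts
        {"time": 2, "technique": "T1021", "dest": "srv-db"},  # Remote Services
    ],
    "srv-db": [
        {"time": 3, "technique": "T1041", "dest": None},      # Exfiltration Over C2 Channel
    ],
}
report = investigate({"id": "INC-1", "host": "ws-114"}, telemetry)
```

The function runs every step without pausing for input, but its output is a report marked for analyst review rather than an executed response, which mirrors the division of labor described above.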

Automated and Human-in-the-Loop Response

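Response is where architectural choices have the most direct security impact. An AI-native SOC needs both modes: automated response for high-confidence, low-risk actions, and structured human-in-the-loop review for actions with a wider blast radius.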

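Fully automated response suits containment actions that are well understood and reversible, such as isolating an endpoint, blocking a suspicious IP, or disabling a compromised account. The Respond function of NIST CSF 2.0 specifically emphasizes the need for predefined response playbooks that can run without manual approval at every step.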

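Human-in-the-loop review should apply to potentially high-impact actions such as network segmentation changes, account deletion, and policy changes. The goal is not to require human approval for everything, which would erase the speed advantage, but to route each decision to the right tier automatically based on confidence and potential impact. AI-powered detection tools that support both automated execution and one-click human approval in a single interface significantly reduce analysts' cognitive load, especially during periods of concentrated attack activity.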
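The routing idea can be expressed as a small decision function. The sketch below is an assumption-laden illustration: the action names, the set of reversible actions, and the 0.9 confidence floor are hypothetical and would be set by each organization's own policy.

```python
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_APPROVAL = "human_approval"


# Assumed policy: containment actions that are well understood and reversible.
REVERSIBLE_ACTIONS = {"isolate_endpoint", "block_ip", "disable_account"}


def route_action(action, confidence, min_confidence=0.9):
    """Auto-execute only reversible actions backed by high confidence;
    everything else goes to a human for approval."""
    if action in REVERSIBLE_ACTIONS and confidence >= min_confidence:
        return Route.AUTO_EXECUTE
    return Route.HUMAN_APPROVAL


decisions = {
    "block_ip@0.95": route_action("block_ip", 0.95),
    "block_ip@0.50": route_action("block_ip", 0.50),
    "delete_account@0.99": route_action("delete_account", 0.99),
}
```

Defaulting to `HUMAN_APPROVAL` means an unrecognized or newly added action type can never run unattended. High confidence alone is not sufficient: `delete_account` at 0.99 still goes to a human because it is not on the reversible list.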

Why "AI-Integrated" Differs from "AI-Native"

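The distinction matters when evaluating platforms. AI-integrated tools add AI features to an existing architecture: a generative AI assistant that summarizes alerts, a machine learning model that scores known alert types, a natural language interface for querying log data. These are useful features. They do not make an architecture AI-native.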

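What matters is where decisions are made. In an AI-integrated system, the SIEM's correlation engine is still the primary detection mechanism, and the AI sits on top of it as an assistant to human analysts. In an AI-native system, the AI models are the primary detection and investigation engine, and humans review the output rather than performing the underlying analysis themselves.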

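This architectural difference has compounding operational consequences. An AI-integrated SIEM still produces an alert queue that needs manual prioritization. An AI-native platform can reduce that queue to a set of pre-investigated incidents. An AI-integrated system speeds up investigation but still needs an analyst to run it. An AI-native platform completes the investigation autonomously and presents the findings.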

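The difference also matters for scalability. Alert volume grows with the environment, and a system that requires human effort at every step cannot scale with the business. An AI SOC architecture that handles ingestion and triage natively can take in more telemetry without adding analyst headcount.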

The Analyst's Role in an AI-Native SOC

A recurring concern is whether the AI-native SOC is designed to eliminate analysts. In practice, the opposite is closer to the truth.

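The bottleneck in most SOCs is not a shortage of analysts willing to investigate interesting threats. It is the ratio of noise to signal. Analysts spend most of their time on Tier 1 triage that produces no meaningful security outcome. That is the work AI-native automation removes, not the investigation, judgment, and threat hunting at which senior analysts excel.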

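What changes is the analyst's working environment. Instead of working through raw alerts one at a time, analysts review pre-investigated incidents with full context. The work becomes more analytical and less mechanical. Detection engineering shifts from writing rules to validating model behavior and tuning thresholds. Threat hunting shifts from manual log queries to exploring anomalous behavior surfaced by AI monitoring.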

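When the transition is managed well, the same number of analysts can handle a substantially larger volume of alerts without the burnout that drives attrition. High turnover among experienced analysts is one of the less-discussed security risks an organization faces.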

Choosing the Right Architecture for Your Environment

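Not every organization starts from the same place. An enterprise with a mature SOC program and a large analyst team has different needs from a mid-sized company with limited security staff. The evaluation criteria differ, but the core questions are the same.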

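How does the platform handle detection coverage for your specific telemetry sources? Can it ingest cloud, endpoint, identity, and network data into a unified model, or does cross-domain correlation have to be configured manually? What does the triage output actually look like: are analysts still working an alert queue, or are they reviewing investigated incidents? How are automated response actions scoped, approved, and audited?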

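The answers to these questions separate AI-native platforms from AI-augmented ones more reliably than any marketing positioning. Before committing to a platform, it is worth investing in a proof of concept that uses telemetry from your real environment rather than sanitized demo data.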

What to Expect from a Mature AI-Native SOC

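Organizations that have made the architectural shift report a consistent pattern. Alert volume holds steady or keeps growing as the environment expands, but the time analysts spend on triage drops significantly. Mean time to detect (MTTD) and mean time to respond (MTTR) both compress, which directly shortens attacker dwell time. Detection coverage widens because behavioral models surface threats that rule-based systems miss.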
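For teams that want to track these two metrics, the arithmetic is straightforward. The sketch below assumes MTTD is the mean of detection time minus occurrence time and MTTR is the mean of resolution time minus detection time. Definitions of MTTR vary between organizations, and the incident records here are invented sample data.

```python
from datetime import datetime, timedelta


def mean_delta(pairs):
    """Average the elapsed time across (start, end) pairs."""
    deltas = [end - start for start, end in pairs]
    return sum(deltas, timedelta()) / len(deltas)


t0 = datetime(2026, 1, 1, 9, 0)
# Invented sample incidents: (occurred, detected, resolved)
incidents = [
    (t0, t0 + timedelta(minutes=10), t0 + timedelta(minutes=40)),
    (t0, t0 + timedelta(minutes=20), t0 + timedelta(minutes=80)),
]

mttd = mean_delta([(occurred, detected) for occurred, detected, _ in incidents])
mttr = mean_delta([(detected, resolved) for _, detected, resolved in incidents])
# mttd is 15 minutes ((10 + 20) / 2); mttr is 45 minutes ((30 + 60) / 2)
```

Measuring both before and after a proof of concept gives a like-for-like comparison, provided the same definitions and incident population are used in both periods.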

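The more important change is structural. Once investigation is automated, the SOC can operate continuously at the same quality level regardless of shift, analyst experience, or spikes in alert volume. A legacy SOC under a large-scale attack degrades because its people are overwhelmed. An AI-native SOC under the same conditions keeps processing the volume and escalates only the incidents that need human judgment.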

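This is not a marginal improvement over traditional operations. It is a different model of how security works, and one that the 2026 threat environment makes increasingly necessary.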